
Prepare Your Network for the Cloud and Beyond


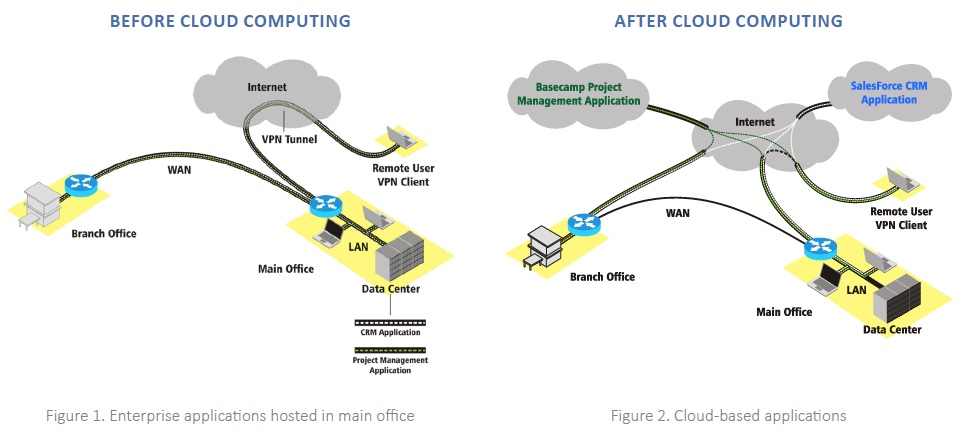


INTRODUCTION

While there has been a great deal of hype surrounding cloud computing, there is a good chance your company is using, or will soon use, an important cloud-computing business service. The operational efficiencies, ease of implementation, and flexible purchasing commitment provided by cloud computing are compelling enough that companies are adopting it across many facets of their business.

Because cloud computing services (cloud services) are heavily focused on applications, little thought often goes into how a shift to cloud services will affect an organization’s network infrastructure. Switching to a cloud consumption model significantly changes the characteristics of the traffic a network must handle, but the primary concern should always be how these changes will affect the quality of the user experience. This concern is especially important because most cloud services use standard Internet protocols and applications as the interface for accessing their services. As a result, any Quality of Service (QoS) policies you have in place will, by default, treat these applications as casual web traffic and assign them to a Best Effort class.

This white paper clarifies the different types of cloud services, explains the implications cloud services have for WAN and Internet architectures, and provides tips on preparing your network with QoS policies to support cloud services. If you’re a system administrator involved in deploying cloud services, this white paper will help you understand the issues faced by the network engineers in your company and how to communicate better with them and with line of business (LOB) stakeholders during project planning and implementation.

CLOUD SERVICES OVERVIEW

In the simplest terms, cloud computing is a system that provides utility computing in a scalable and easily consumable form via the Internet. While the concept of utility computing is not new, only the recent maturity of technologies such as high-powered multi-core CPUs, virtualization, and fast, ubiquitous Internet access has made it possible to turn cloud computing into services that provide real business value.

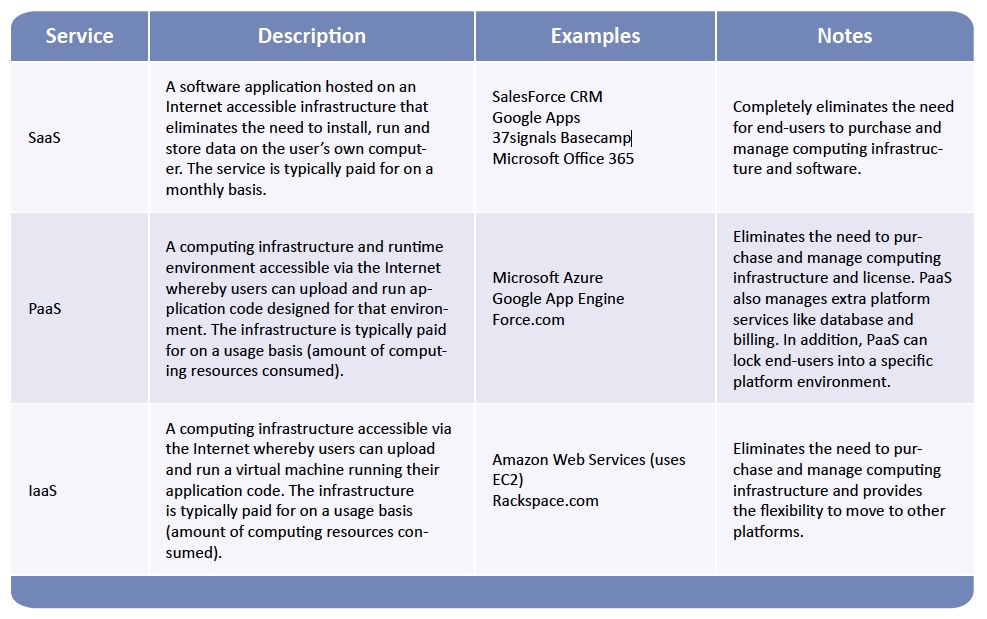

While cloud services are founded on the concept of utility computing, they can be packaged in many different ways, and some forms do not follow a utility computing pricing model. Cloud service models and offerings are still in some flux, but the industry has currently settled on three primary cloud service models: Software as a Service (SaaS), Platform as a Service (PaaS), and Infrastructure as a Service (IaaS). The following table summarizes the different cloud services:

QOS BEST PRACTICES FOR CLOUD TRAFFIC

As with any other IT project, it is important to plan ahead before making any changes to your network and application infrastructure. Here are the major steps to follow when planning network QoS for cloud services:

- Communicate with the other IT teams: Especially in large companies, multiple teams will be involved in rolling out cloud services. If you’re putting unified communications in the cloud, you may be working with a different group than if you were implementing Google Apps for Business. In either case, ideally those teams will reach out to you. If they don’t, don’t hesitate to insert yourself into the conversation: explain that you’d like to discuss what needs to be done before the rollout rather than try to fix things afterwards.

- Understand the traffic: Cloud services will cause a shift in traffic and usage patterns by adding traffic to and from the cloud service provider. If you’re replacing an internal application with a cloud application, that legacy traffic will disappear, changing traffic patterns to and from your data centers. You should develop a good understanding of your current conditions by baselining your network traffic. You can learn more about your cloud service traffic by understanding the services involved (see Classifying Cloud Traffic) and by tracking live traffic during a pilot rollout.

- Develop initial design: Put together a rough design of how you want to implement QoS for cloud services into your current architecture. Cloud service traffic fits best in QoS models with 4 or more classes. If baselining indicates that you need to add bandwidth or change the network architecture, incorporate this into the plan.

- Review your plan: Present your plan to other IT teams and key stakeholders in your company. Business and technology leaders will want to know what you are changing and how it might impact services already in place. If the current network architecture needs updating (upgraded WAN services, new routers, or even a different network design), you’ll also need to make that justification.

- Design the details: Most cloud service traffic will use existing Internet protocols, so to distinguish it from casual Internet traffic you should know the IP addresses of the services you will be connecting to.

- Implement and test: Try your policies in the lab or as a pilot deployment. This is where you can make sure everything is running correctly and fine-tune bandwidth allocations or reassign protocols to different classes if necessary.

- Production roll-out: Implement the policies in the production environment, then monitor and fine-tune as you go. This step is similar to the pilot project or lab testing, but it takes place on the live network and on a larger scale.

Discussion and Planning

Before cloud services are deployed, some discussion between the application teams and network teams should occur. This will help proactively address network performance problems that may impact the end-user experience with cloud and other services already running on the network.

Most cloud services are delivered via the Internet using standard Internet client applications. This is appealing for companies, since Internet access gives users the flexibility to use the service from virtually anywhere. The downside is that unless you adjust your QoS policies, standard Internet protocols such as HTTP and HTTPS will be treated the same as casual web browsing. Whether the anticipated load will affect end users is best determined in the context of your current network design and the services running across it.

Some specialized services may use dedicated connections directly to the service provider if the bandwidth and quality requirements dictate it. Examples of specialized services are healthcare applications, unified communications, and offsite backup hosted in the cloud. In these cases, you should consider your WAN architecture and the impact cloud services used by your branch offices will have on the network.

Here’s a list of issues and questions that should be addressed and considered during the discussion phase:

- What type of service is being used? – SaaS, PaaS, IaaS

- What are the typical activities associated with the service? – Web GUI interaction, file uploads/downloads, console session interaction

- What is the nature of the cloud service traffic? – Typical bandwidth requirements, burstiness of the traffic, real-time vs. non-real-time, business importance of the traffic

- What are the usage patterns? – Number of users, frequency of usage, usage vs. time of day, locations of use (branch/HQ/remote)

- How is Internet access provided for your branch offices? – Through the HQ vs. dedicated Internet access at each branch

- Is the cloud service replacing a service that is currently hosted in-house? – For example, an internal CRM replaced by Salesforce CRM
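To turn the answers about number of users, frequency of use, and locations into a first estimate of network load, a rough calculation is often enough. The Python sketch below multiplies users by an assumed peak concurrency and an assumed per-user bandwidth for each service. The CloudServiceProfile class, its fields, and all of the figures are hypothetical placeholders to be replaced with numbers from your pilot or your provider.

```python
from dataclasses import dataclass


@dataclass
class CloudServiceProfile:
    """Hypothetical per-site usage profile gathered during the discussion phase."""
    name: str
    users: int               # users at the site who use the service
    peak_concurrency: float  # assumed fraction of those users active at the busiest hour (0-1)
    per_user_kbps: float     # assumed average bandwidth per active user

    def peak_kbps(self) -> float:
        return self.users * self.peak_concurrency * self.per_user_kbps


def site_peak_load(profiles: list[CloudServiceProfile], link_kbps: float) -> tuple[float, float]:
    """Return (estimated peak kbps, fraction of the link that load would occupy)."""
    total = sum(p.peak_kbps() for p in profiles)
    return total, total / link_kbps


if __name__ == "__main__":
    branch = [
        CloudServiceProfile("crm-saas", users=40, peak_concurrency=0.5, per_user_kbps=150),
        CloudServiceProfile("office-saas", users=60, peak_concurrency=0.3, per_user_kbps=250),
    ]
    total, share = site_peak_load(branch, link_kbps=10_000)
    print(f"Estimated peak cloud load: {total:.0f} kbps ({share:.0%} of the link)")
```

With these illustrative inputs, the branch would need about 7500 kbps at its busiest hour, 75% of a 10 Mbps link. A result like that is a strong hint to revisit bandwidth before the rollout rather than after it.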

Investigation and Design

Before proceeding to the design stage, use a flow monitoring and reporting tool to baseline what’s happening on your network today. If you have QoS policies in place, use a tool that can also monitor QoS class performance. Use this information to determine the typical utilization of your current network applications and to find and fix any trouble spots you encounter. The data may even indicate that further network infrastructure changes are needed to support your new cloud services.
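One simple way to express a baseline is to compare average throughput per application over a fixed window against link capacity. The sketch below assumes flow records have already been exported (for example, via NetFlow) and reduced to hypothetical (application, byte count) pairs. A real collector will use its own record format and field names.

```python
from collections import defaultdict

# Hypothetical, simplified flow record: (application name, bytes observed).
FlowRecord = tuple[str, int]


def baseline_by_application(flows: list[FlowRecord], window_seconds: float,
                            link_bps: float) -> dict[str, tuple[float, float]]:
    """Average throughput (bps) and share of link capacity for each application
    over one observation window, largest consumers first."""
    bytes_per_app: dict[str, int] = defaultdict(int)
    for app, byte_count in flows:
        bytes_per_app[app] += byte_count

    report = {}
    for app, total_bytes in bytes_per_app.items():
        avg_bps = total_bytes * 8 / window_seconds
        report[app] = (avg_bps, avg_bps / link_bps)
    return dict(sorted(report.items(), key=lambda item: item[1][0], reverse=True))


if __name__ == "__main__":
    five_minutes = [("web", 150_000_000), ("erp", 75_000_000), ("erp", 37_500_000)]
    for app, (bps, share) in baseline_by_application(five_minutes, 300, 10_000_000).items():
        print(f"{app:>5}: {bps / 1e6:.1f} Mbps ({share:.0%} of link)")
```

Averages over long windows hide peaks. It is usually more informative to compute this per short interval (for example, every five minutes) and then look at the busiest intervals.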

Once you understand where things are today, you’ll want to know a little more about the type of protocols and traffic characteristics used by the cloud services.

- SaaS services such as Microsoft Office 365, Google Docs, and 37signals Basecamp will typically generate many light HTTP and HTTPS transactions, with occasional large file transfers when documents are uploaded or downloaded.

- PaaS services will likely have minimal network impact during application development and after deployment, although there may be windows of high network use when applications are uploaded during the testing phase or revised after deployment.

- IaaS traffic will vary with business usage and is often characterized by large file transfers using protocols such as SFTP. For example, if storage services like Amazon S3 are used for data backup or for financial or scientific data storage, this will likely result in regular transfers of large amounts of data.
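For IaaS-style bulk transfers, a quick calculation shows whether a recurring transfer fits its window at the bandwidth you plan to give the Bulk Data class. The sketch below treats 1 GB as 10^9 bytes and applies an efficiency factor for protocol overhead. The 0.9 default is an illustrative assumption, not a measured SFTP figure.

```python
def transfer_hours(data_gb: float, allocated_mbps: float, efficiency: float = 0.9) -> float:
    """Hours needed to move data_gb gigabytes (10**9 bytes) through a class
    guaranteed allocated_mbps megabits per second.

    efficiency is an assumed allowance for protocol and encryption overhead
    (for example SFTP); 0.9 is illustrative, not a measured value.
    """
    if allocated_mbps <= 0 or not 0 < efficiency <= 1:
        raise ValueError("allocated_mbps must be > 0 and efficiency in (0, 1]")
    bits = data_gb * 1e9 * 8
    effective_bps = allocated_mbps * 1e6 * efficiency
    return bits / effective_bps / 3600


if __name__ == "__main__":
    print(f"{transfer_hours(45, 10, efficiency=1.0):.1f} h")  # ideal case, no overhead
    print(f"{transfer_hours(45, 10):.1f} h")                  # with the assumed overhead
```

For example, moving 45 GB through a guaranteed 10 Mbps takes 10 hours even with no overhead. If that exceeds the nightly backup window, you need to change either the allocation or the schedule.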

With this information you can put together a rough design, share it with other IT teams and seek approval from your directors and executives. With the plan approved, it’s time to work out the details of your QoS policies.

The primary QoS mechanisms that will be put into use are:

- Identification and classification

- Marking and queuing

- Traffic shaping for WAN links

Classifying Cloud Traffic

Identifying and classifying your cloud traffic is the first step to creating policies. You can use this step during your pilot and production roll-outs to make sure you are properly classifying the traffic before taking any other QoS actions. Also, by using a QoS monitoring tool with a monitoring policy, you will get a good idea of the traffic levels you can expect from your cloud services.

There are a couple of approaches to identifying and classifying cloud services. You can directly use the IP addresses and/or port numbers used to access the service and create an access control list (ACL). To get the IP addresses for your cloud applications, you can ask your cloud service provider or use a flow-monitoring tool to discover them during the course of operation.
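The matching logic behind such an ACL is first-match on destination prefix and, optionally, port. The Python sketch below models that logic with the standard ipaddress module to show how cloud traffic is separated from general Internet traffic. It is not router configuration. The rules use RFC 5737 documentation prefixes (203.0.113.0/24 and 198.51.100.0/24) as hypothetical stand-ins for real provider ranges, which you would obtain from the provider or from your flow data.

```python
import ipaddress
from dataclasses import dataclass, field


@dataclass
class CloudClassRule:
    """One ACL-style rule: destination prefixes plus optional TCP/UDP ports."""
    qos_class: str
    prefixes: list[str]
    ports: set[int] = field(default_factory=set)  # empty set means "any port"

    def __post_init__(self) -> None:
        self.networks = [ipaddress.ip_network(p) for p in self.prefixes]

    def matches(self, dst_ip: str, dst_port: int) -> bool:
        addr = ipaddress.ip_address(dst_ip)
        in_prefix = any(addr in net for net in self.networks)
        return in_prefix and (not self.ports or dst_port in self.ports)


def classify(dst_ip: str, dst_port: int, rules: list[CloudClassRule],
             default: str = "Best Effort") -> str:
    """First matching rule wins, as in an ACL; unmatched traffic falls to the default class."""
    for rule in rules:
        if rule.matches(dst_ip, dst_port):
            return rule.qos_class
    return default


# Hypothetical rules built on documentation prefixes, NOT real provider ranges.
RULES = [
    CloudClassRule("Transactional Data", ["203.0.113.0/24"], {443}),
    CloudClassRule("Bulk Data", ["198.51.100.0/24"], {21, 22}),
]

if __name__ == "__main__":
    for dst, port in [("203.0.113.10", 443), ("198.51.100.7", 22), ("192.0.2.1", 443)]:
        print(dst, port, "->", classify(dst, port, RULES))
```

Provider address ranges may change over time, so an IP-based classification list needs periodic review.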

A simpler, and recommended, method is to take advantage of Cisco’s advanced NBAR2 classification engine, which can recognize cloud-based applications using deep packet inspection. With this method, you only need to select the cloud-based application by name and then manage it with marking or queuing policies. Cisco updates its NBAR2 classifications regularly, so check Cisco’s website to verify which cloud-based applications are supported.

Marking and Queuing for Cloud Traffic

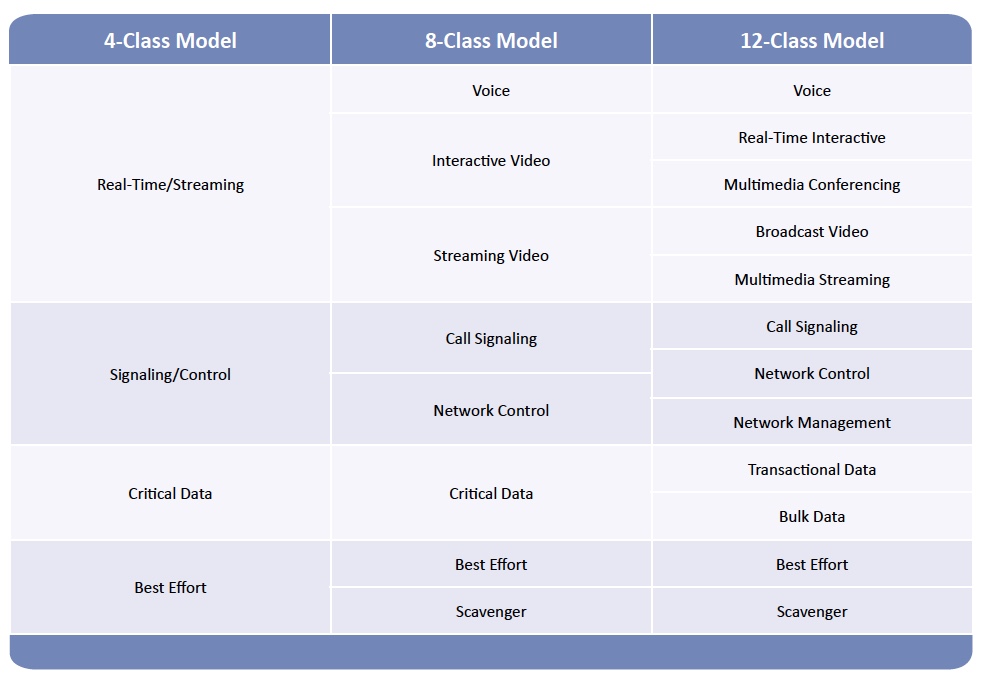

Once traffic is classified, the appropriate marking action can be taken. The marking action will depend on the class model you are using, as well as the QoS treatment you intend to give the traffic. As an example, let’s consider three of Cisco’s class models:

In the 4- and 8-class models, you will likely map cloud service traffic to the Critical Data class or possibly to the Best Effort class. In more granular models, such as the 11- or 12-class models, you can choose among the Transactional Data, Bulk Data, and Best Effort classes. Transactional Data covers services that involve user interaction where timely responses are expected, typically CRM, ERP, and similar business applications. Bulk Data is used for high-throughput traffic that involves no user interaction, such as backups and uploads to cloud storage services (e.g., over SFTP or FTP).
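The class choice can be expressed as a small lookup. The sketch below maps the interaction profile described above to a class in a 12-class model and to the DSCP values Cisco’s 12-class model uses for those classes: AF21 for Transactional Data, AF11 for Bulk Data, and DF for Best Effort. pick_class and marking_for are hypothetical helpers, and the two-flag decision rule is a simplification of a real classification review.

```python
def af_dscp(af_class: int, drop_precedence: int) -> int:
    """DSCP value of AFxy from RFC 2597: binary xxxyy0, i.e. 8*x + 2*y."""
    if af_class not in range(1, 5) or drop_precedence not in range(1, 4):
        raise ValueError("AF class must be 1-4 and drop precedence 1-3")
    return 8 * af_class + 2 * drop_precedence


# Subset of a 12-class model: class name -> (per-hop behavior, DSCP).
DSCP_BY_CLASS = {
    "Transactional Data": ("AF21", af_dscp(2, 1)),  # 18
    "Bulk Data": ("AF11", af_dscp(1, 1)),           # 10
    "Best Effort": ("DF", 0),
}


def pick_class(user_interactive: bool, high_throughput: bool) -> str:
    """Hypothetical rule: interactive traffic expecting timely responses is
    Transactional Data; non-interactive high-throughput traffic is Bulk Data."""
    if user_interactive:
        return "Transactional Data"
    if high_throughput:
        return "Bulk Data"
    return "Best Effort"


def marking_for(user_interactive: bool, high_throughput: bool) -> tuple[str, str, int, int]:
    """Return (class, PHB, DSCP, ToS byte). DSCP occupies the upper six bits of
    the IPv4 ToS / IPv6 Traffic Class byte, so the byte value is DSCP << 2
    with the two ECN bits left at zero."""
    qos_class = pick_class(user_interactive, high_throughput)
    phb, dscp = DSCP_BY_CLASS[qos_class]
    return qos_class, phb, dscp, dscp << 2


if __name__ == "__main__":
    print(marking_for(user_interactive=True, high_throughput=False))  # e.g. a SaaS CRM
    print(marking_for(user_interactive=False, high_throughput=True))  # e.g. backup to cloud storage
```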

When finalizing marking and queuing for your QoS policies, keep these Cisco best practices in mind:

- Mark packets as close to the source of the traffic as possible (e.g., on the access switch connected to the device). If this is not practical, packets can be marked at other points in the network where congestion is likely to occur.

- The majority of traffic will be classified as default, so enough bandwidth should be provided to support this type of traffic.

- Real-time traffic should use priority queues and be assigned adequate bandwidth. However, you should limit the overall priority queue to 33% of the available bandwidth to prevent the starvation of other application traffic.

- The total bandwidth allocation for classes other than default should not exceed 75% of a link’s capacity to allow for Layer 2 overhead and Best Effort traffic.

- Recreational or Scavenger traffic should be policed as close to the source as possible, so that it cannot consume unnecessary bandwidth once it exceeds a set threshold.
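The two numeric guidelines above lend themselves to an automated sanity check before a policy is deployed. The sketch below assumes allocations are expressed as percentages of link capacity and that the default class is named class-default, as in Cisco’s Modular QoS CLI. check_policy and the example class names are hypothetical.

```python
def check_policy(allocations: dict[str, float], priority_classes: set[str],
                 default_class: str = "class-default") -> list[str]:
    """Check percent-of-link allocations against the 33% priority-queue and
    75% non-default guidelines. Returns warnings (empty list if none)."""
    warnings = []
    priority_total = sum(pct for cls, pct in allocations.items() if cls in priority_classes)
    if priority_total > 33:
        warnings.append(f"priority queue total {priority_total:g}% exceeds 33%")
    non_default_total = sum(pct for cls, pct in allocations.items() if cls != default_class)
    if non_default_total > 75:
        warnings.append(f"non-default classes total {non_default_total:g}% exceeds 75%")
    return warnings


if __name__ == "__main__":
    ok = {"Voice": 30, "Transactional Data": 25, "Bulk Data": 10, "class-default": 35}
    too_much = {"Voice": 35, "Transactional Data": 30, "Bulk Data": 15, "class-default": 20}
    print(check_policy(ok, {"Voice"}))
    print(check_policy(too_much, {"Voice"}))
```

The first example policy passes. The second is flagged twice: once for a 35% priority queue and once for 80% allocated outside the default class.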

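Traffic coming into your WAN edge from the cloud service provider over the Internet (downstream cloud service traffic) also needs to be classified and marked, as close as possible to the point where it enters your network, because you cannot rely on markings applied outside your network.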

Additional WAN Network Considerations

At the branch office router and HQ WAN aggregator, there will likely be a couple of additional factors you need to take into consideration:

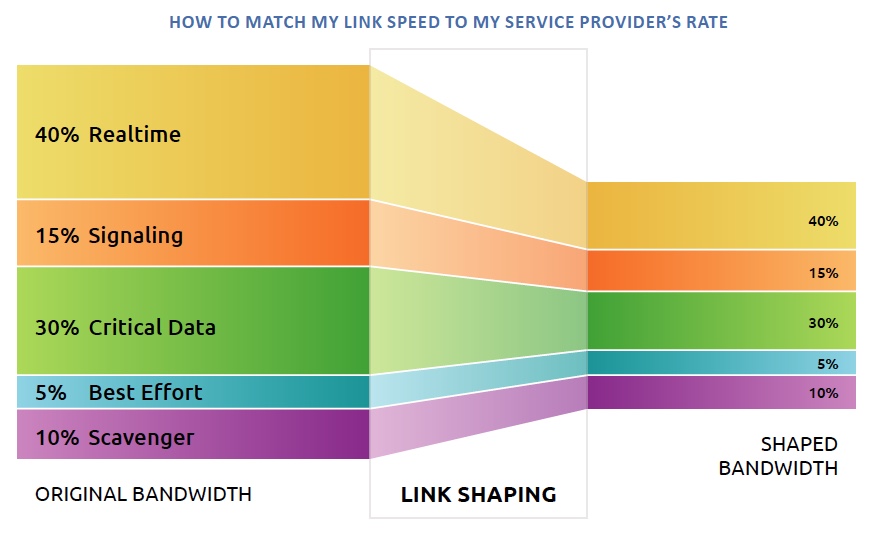

- Speed mismatches between the LAN and WAN/Internet connections

- QoS treatment for traffic traversing a service provider network

Almost without exception, there will be a speed mismatch when traffic travels from an interface on the internal side of your aggregator router to one connected to the WAN or Internet. One way to handle this is a hierarchical policy that nests a queuing policy within a shaping policy. The parent policy shapes traffic to a sub-line rate that matches the contracted WAN or Internet service capacity, and the child policy prioritizes traffic within that shaped rate. Remember, your capacity is determined by the terms of the services you have contracted from your provider. Just because you have a 100 Mbps link to the provider does not mean that you have a 100 Mbps contracted rate.
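Simple arithmetic shows why the parent shaper matters. A router’s queuing policy only takes effect when its own interface is congested. If the router believes it has 100 Mbps but the provider only carries 50 Mbps, the excess is dropped inside the provider network, where your priorities do not apply. Once the parent policy shapes traffic to the contracted rate, the child policy’s percentages apply to that shaped rate. The sketch below computes the resulting per-class guarantees. child_class_rates is a hypothetical helper, and the rates and percentages are illustrative.

```python
def child_class_rates(contracted_mbps: float, port_mbps: float,
                      child_percent: dict[str, float]) -> dict[str, float]:
    """Guaranteed Mbps per class when the child queuing policy's percentages
    apply to the parent shaper's rate (the contracted rate), not to the port speed."""
    if not 0 < contracted_mbps <= port_mbps:
        raise ValueError("contracted rate must be positive and no higher than the port speed")
    if sum(child_percent.values()) > 100:
        raise ValueError("child allocations exceed 100% of the shaped rate")
    return {cls: contracted_mbps * pct / 100 for cls, pct in child_percent.items()}


if __name__ == "__main__":
    child = {"Voice": 30, "Transactional Data": 25, "Bulk Data": 10, "class-default": 35}
    shaped = child_class_rates(contracted_mbps=50, port_mbps=100, child_percent=child)
    port_based = child_class_rates(contracted_mbps=100, port_mbps=100, child_percent=child)
    for cls in child:
        print(f"{cls:<20} shaped: {shaped[cls]:5.1f} Mbps   port-based: {port_based[cls]:5.1f} Mbps")
```

With a 50 Mbps contract on a 100 Mbps port, a class given 25% is guaranteed 12.5 Mbps, not the 25 Mbps a port-based calculation would suggest.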

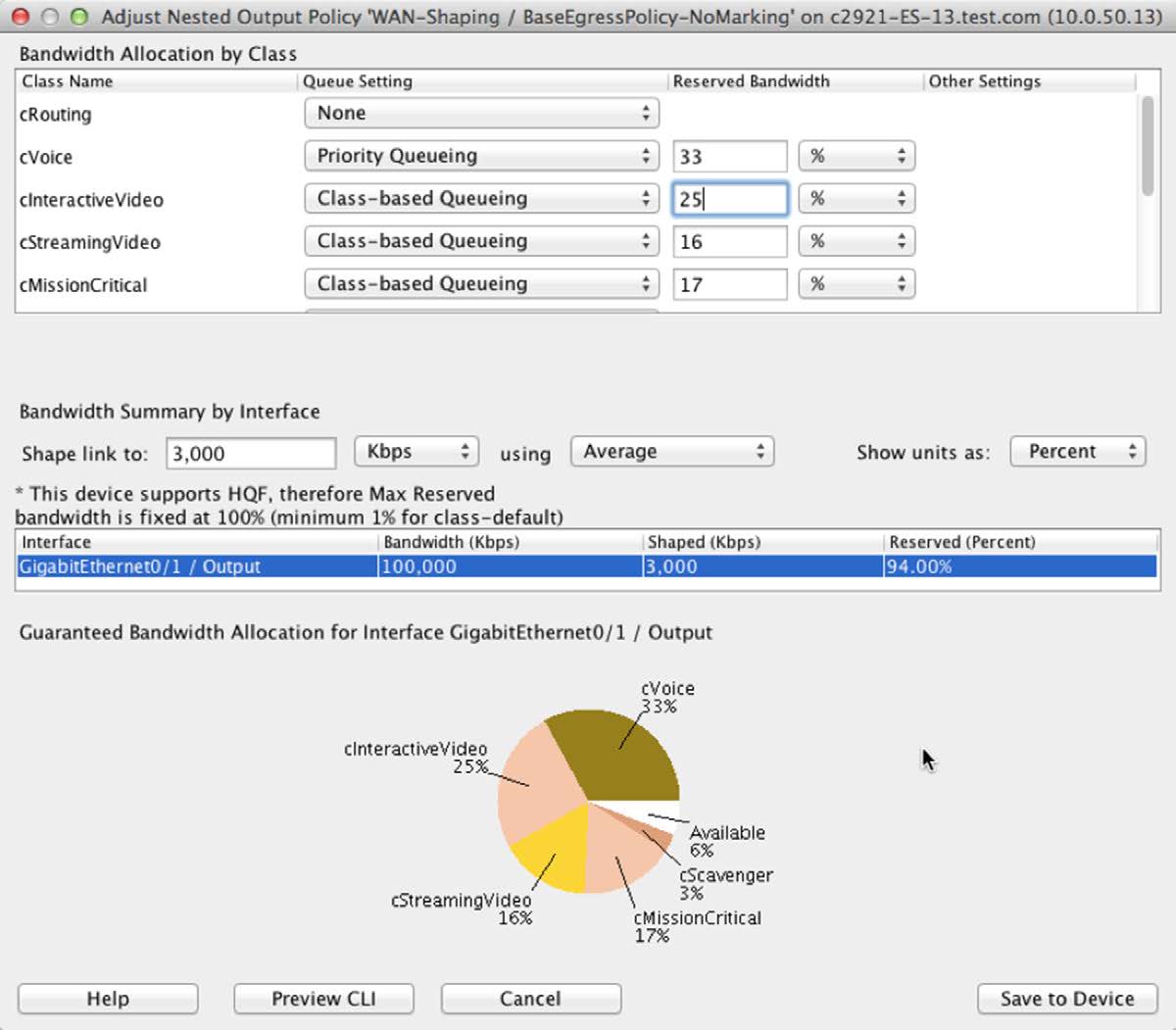

Figure 3. Using hierarchical policies and link shaping to accommodate speed mismatches

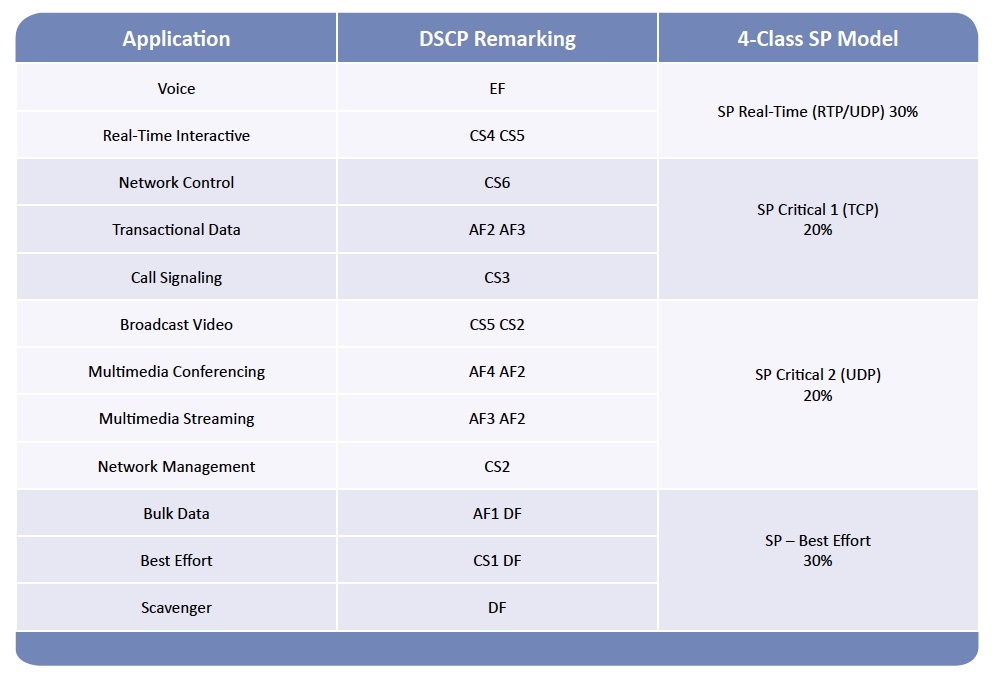

The second consideration applies if you have an MPLS service with multiple Class of Service (CoS) levels. These services typically come in 3- or 4-class models. The accompanying table outlines Cisco best practices for remarking and mapping a 12-class model to a 4-class MPLS service. Transactional Data is mapped to SP-Critical 1, and Bulk Data is lumped into SP-Best Effort.
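When you have load estimates per enterprise class, it helps to collapse them into the provider’s classes to see whether each SP class is sized adequately. In the sketch below, only the Transactional Data and Bulk Data mappings come from the mapping described above. The Best Effort entry and the SP-Best Effort fallback for unlisted classes are assumptions, and the helper names are hypothetical.

```python
from collections import defaultdict

# Enterprise (12-class) class -> provider (4-class MPLS) class.
# Only the first two entries come from the mapping described in the text;
# the Best Effort entry is an assumption for illustration.
ENTERPRISE_TO_SP = {
    "Transactional Data": "SP-Critical 1",
    "Bulk Data": "SP-Best Effort",
    "Best Effort": "SP-Best Effort",
}


def sp_class_for(enterprise_class: str, fallback: str = "SP-Best Effort") -> str:
    """Provider class for an enterprise class; unlisted classes use an assumed fallback."""
    return ENTERPRISE_TO_SP.get(enterprise_class, fallback)


def collapse_to_sp(enterprise_mbps: dict[str, float]) -> dict[str, float]:
    """Sum per-enterprise-class load into provider classes so each total can be
    compared with the bandwidth contracted for that provider class."""
    totals: dict[str, float] = defaultdict(float)
    for enterprise_class, mbps in enterprise_mbps.items():
        totals[sp_class_for(enterprise_class)] += mbps
    return dict(totals)


if __name__ == "__main__":
    print(collapse_to_sp({"Transactional Data": 12.5, "Bulk Data": 5.0, "Best Effort": 17.5}))
```

For instance, 12.5 Mbps of Transactional Data, 5 Mbps of Bulk Data, and 17.5 Mbps of Best Effort collapse into 12.5 Mbps of SP-Critical 1 and 22.5 Mbps of SP-Best Effort.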

QOS IMPLEMENTATION SUMMARY

The guidelines in this section may or may not be applicable given the needs of your business, the architecture of your LAN and WAN, and the traffic types running over it. Make sure to consider all the factors that are unique to your business when designing your QoS policies.

Tools for a Successful Cloud Services Implementation

Whether you are deploying QoS for cloud services or investigating and troubleshooting their performance, the right tools will speed up implementation and troubleshooting and help deliver a better end-user experience.

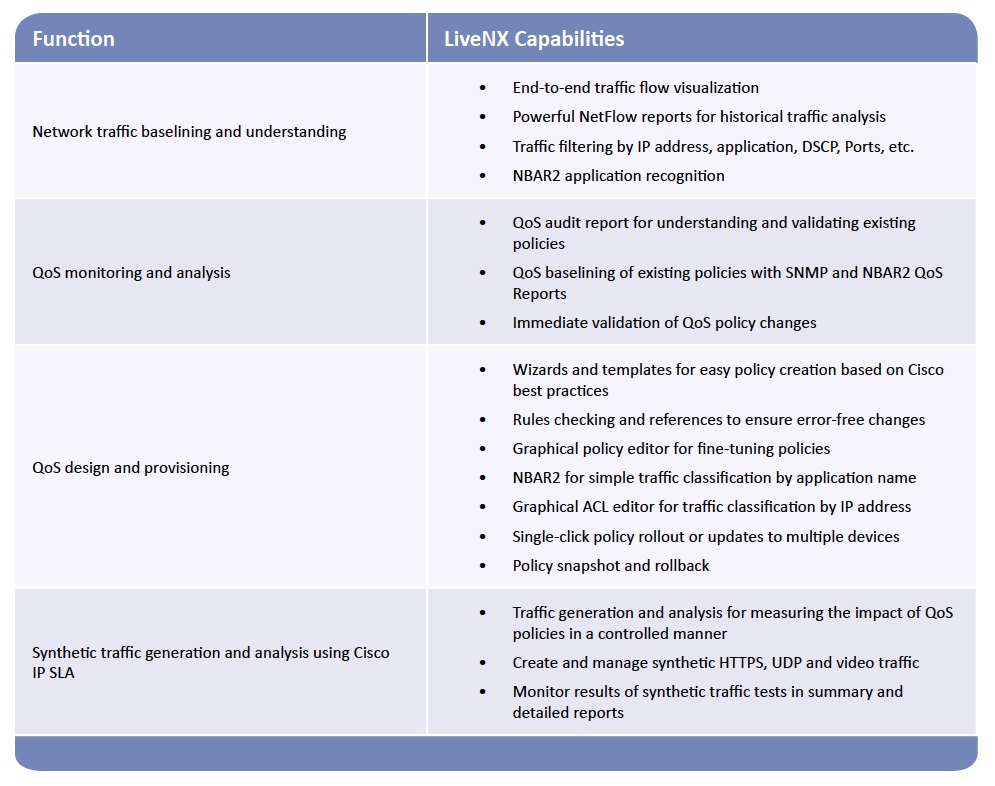

LiveNX, LiveAction’s application-aware network performance management software with QoS control, is designed to simplify network management. With innovative and unique QoS capabilities, it combines several functions for implementing QoS for cloud services into a cohesive interface. The following table summarizes tasks required for implementing cloud services and the LiveNX capabilities that will help:

Network Traffic Baselining and Understanding

LiveNX has several analysis tools available for the information gathering that you will need in your cloud service implementation.

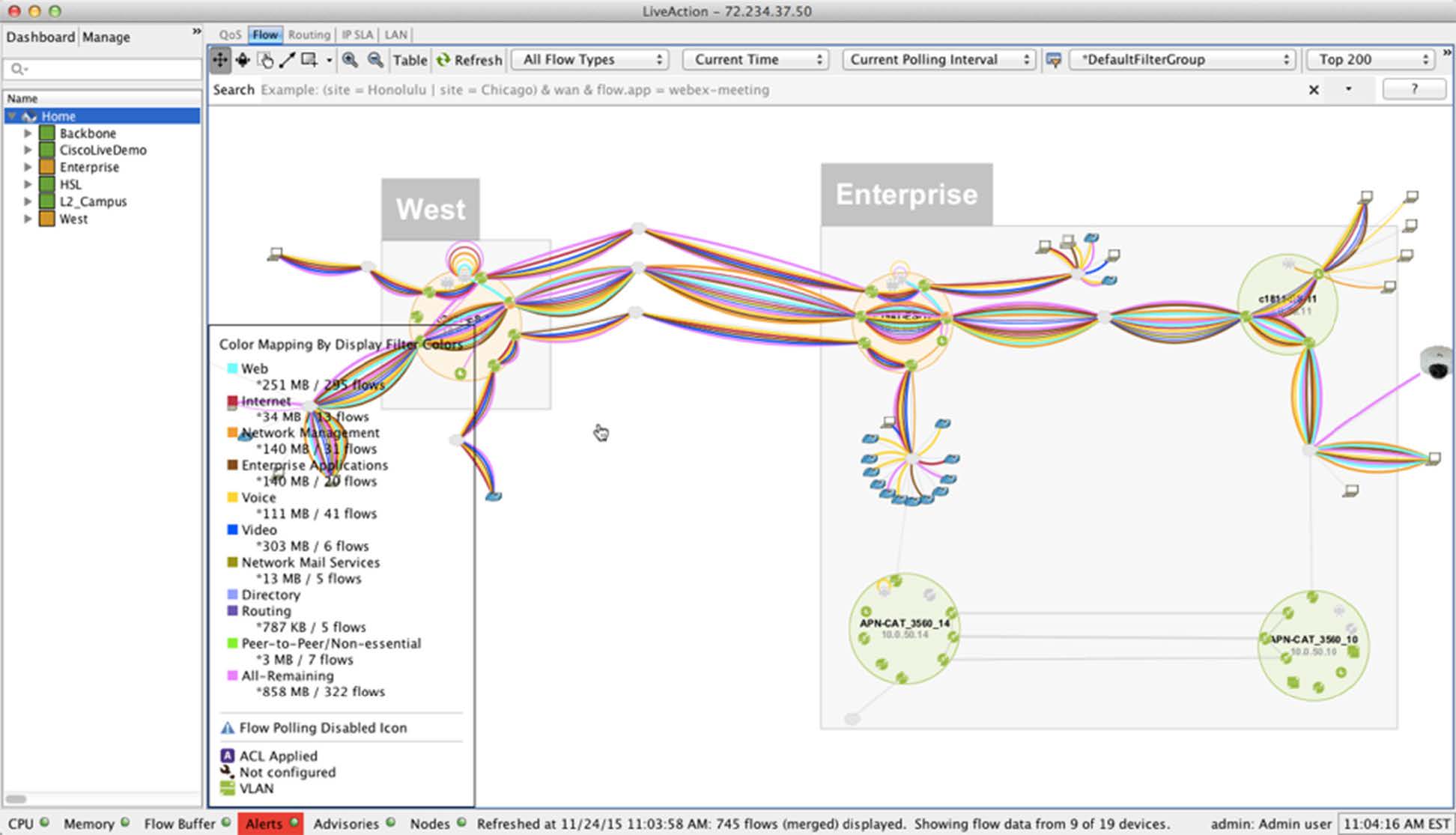

LiveNX’s end-to-end flow visualization helps with identifying current traffic patterns and gives you the power to troubleshoot any potential issues that need to be resolved.

Figure 4. Viewing network-wide traffic flows using LiveNX’s topology screen

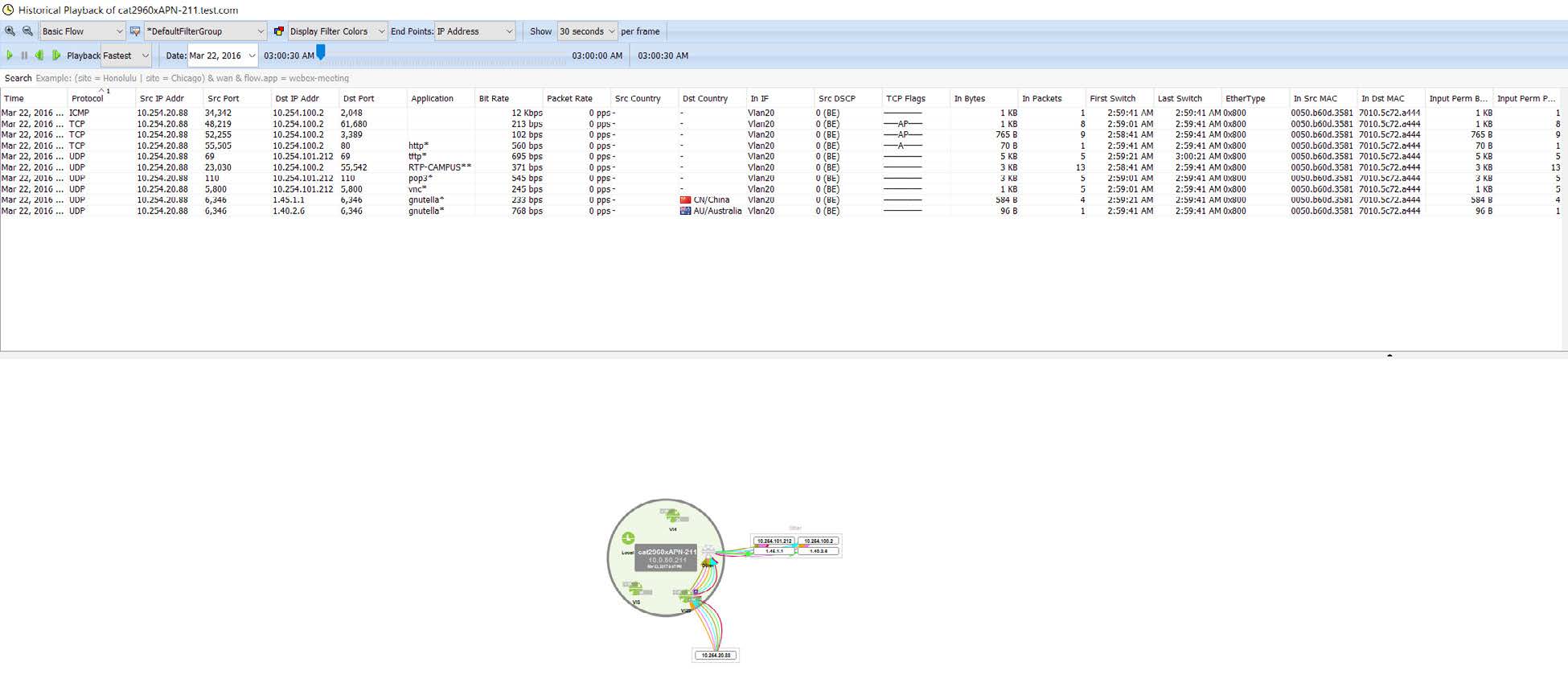

LiveNX’s playback feature offers a DVR-like way to identify which traffic may need to be addressed by your QoS policy implementation.

Figure 5. Playing back LiveNX’s historical flow data

Rich reports show utilization by application, top talkers, DSCP markings, and more. You can view them for the entire network or for individual devices and interfaces, over selected time periods.

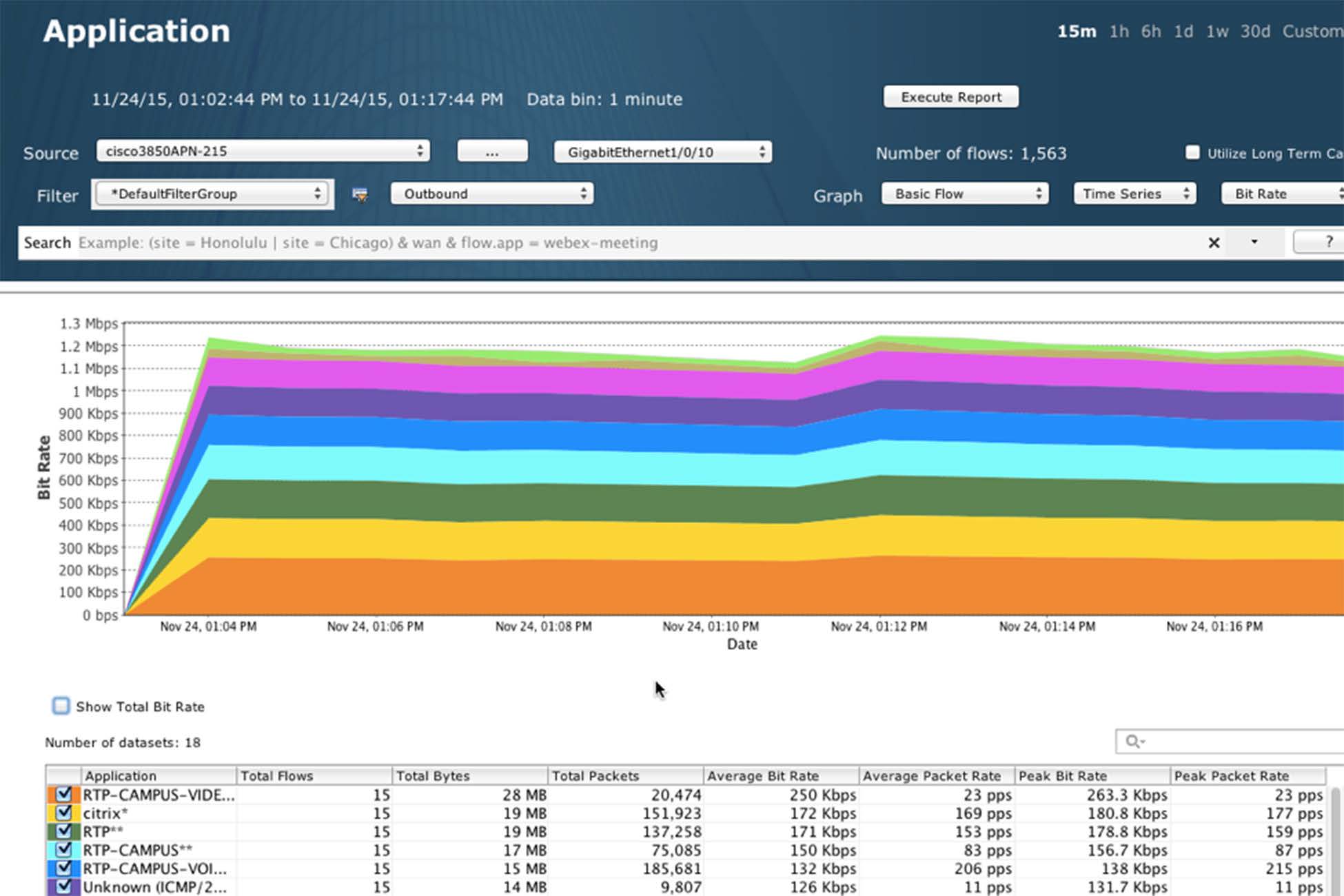

Figure 6. Using LiveNX’s historical flow data to verify BW utilization by application

QoS Design and Provisioning

LiveNX greatly simplifies QoS policy creation with expert capability in the form of GUI wizards, templates, and interactive screens for designing, deploying, and adjusting QoS policies.

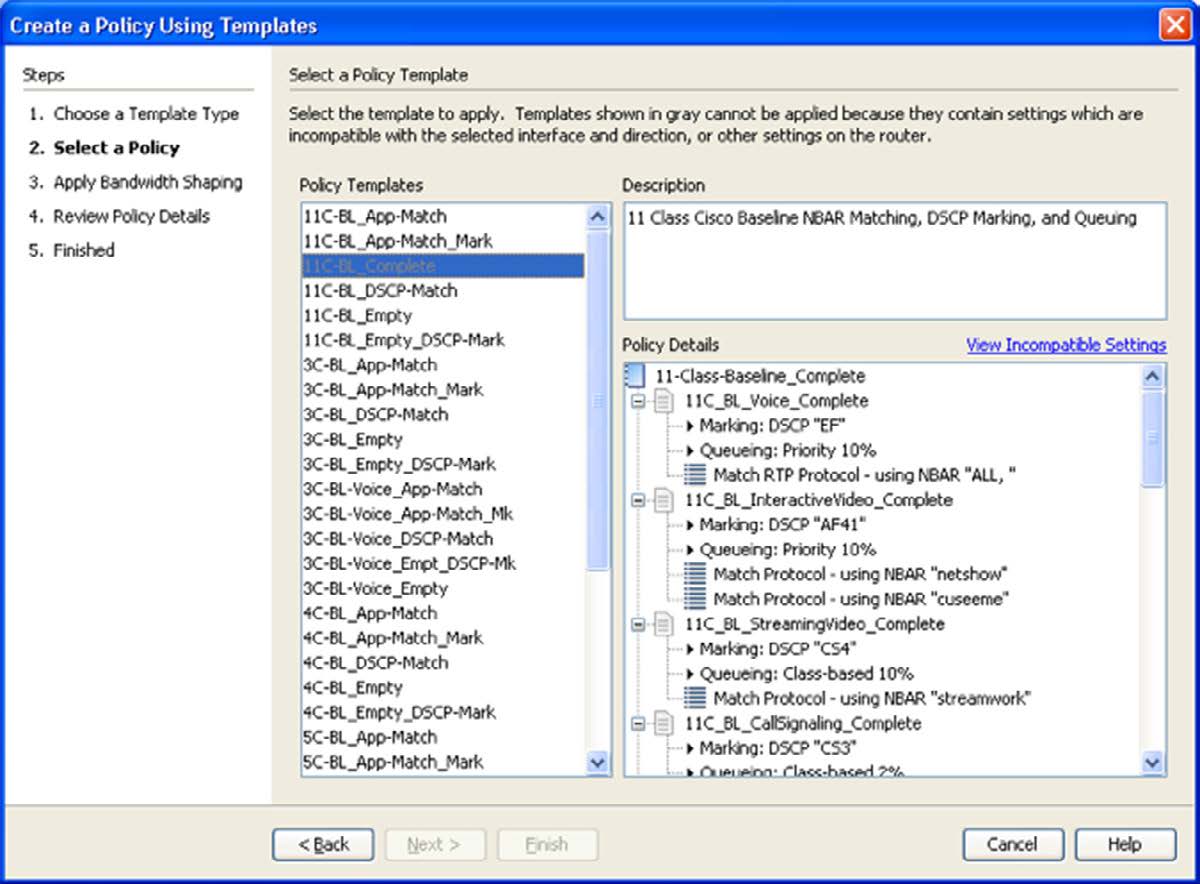

Policies can be created using built-in wizards based on Cisco design recommendations.

Figure 7. Creating a QoS policy using LiveNX’s template wizard

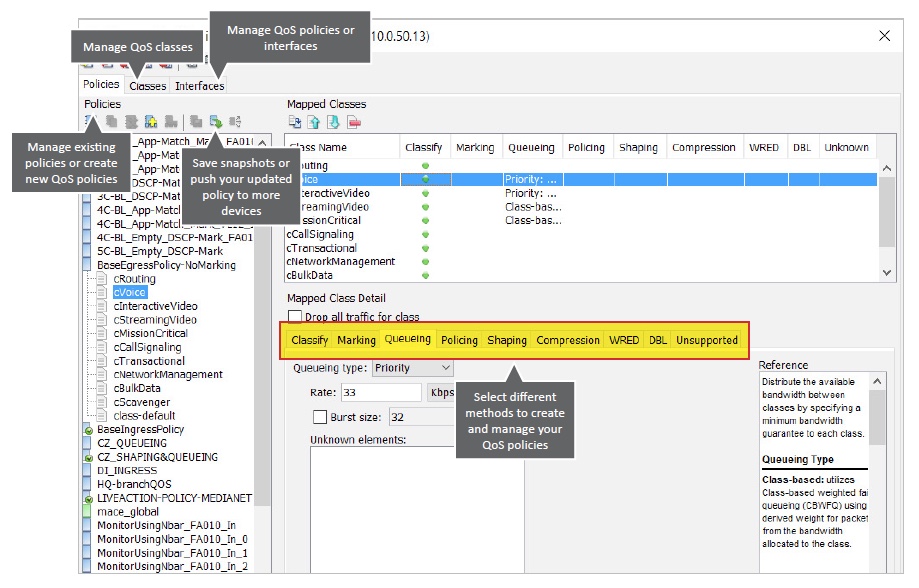

Policies can also be customized and managed with a comprehensive QoS policy editor/manager.

Figure 8. Using LiveNX’s QoS editor and manager to adjust policies

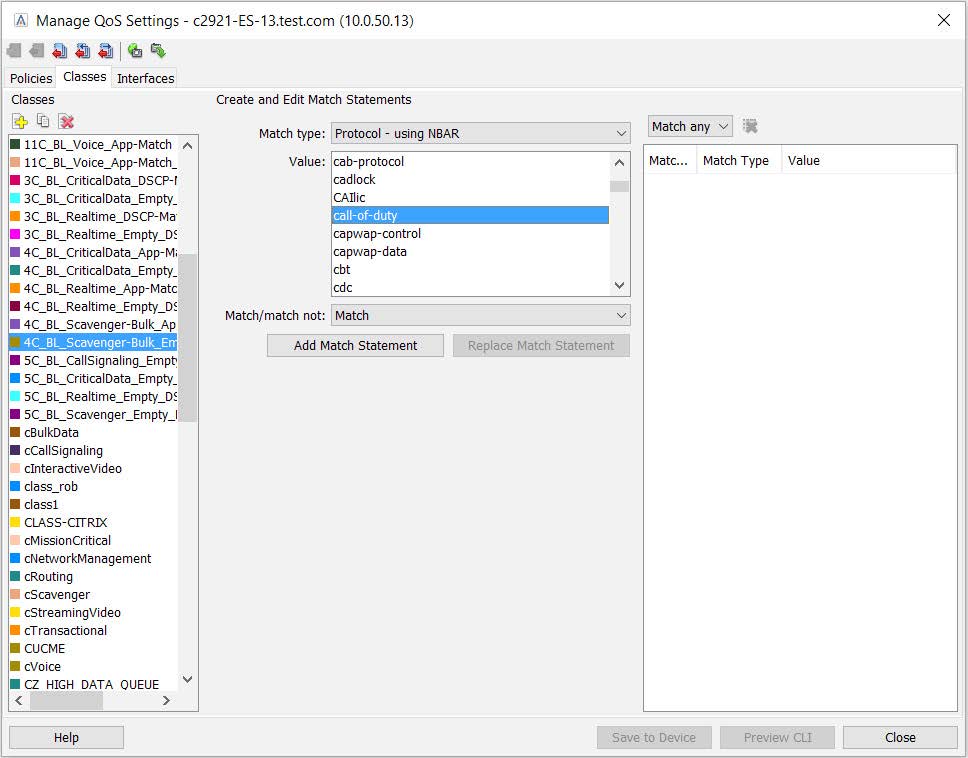

Figure 9. Using LiveNX’s QoS policy editor and NBAR2 to manage cloud-based applications based on advanced classification techniques

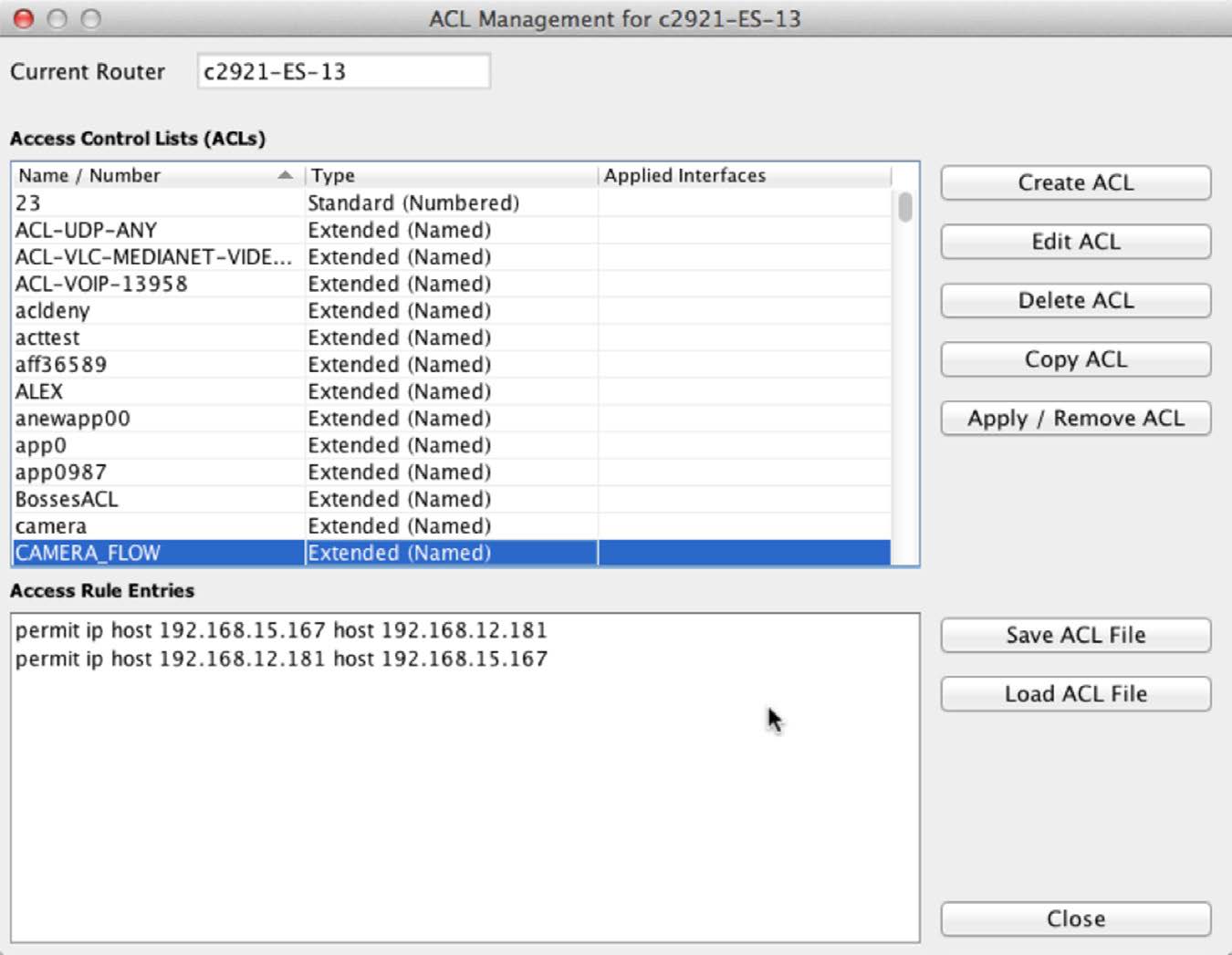

ACLs can be created and edited using LiveNX’s built-in ACL editor:

Figure 10. Create, edit and apply ACLs

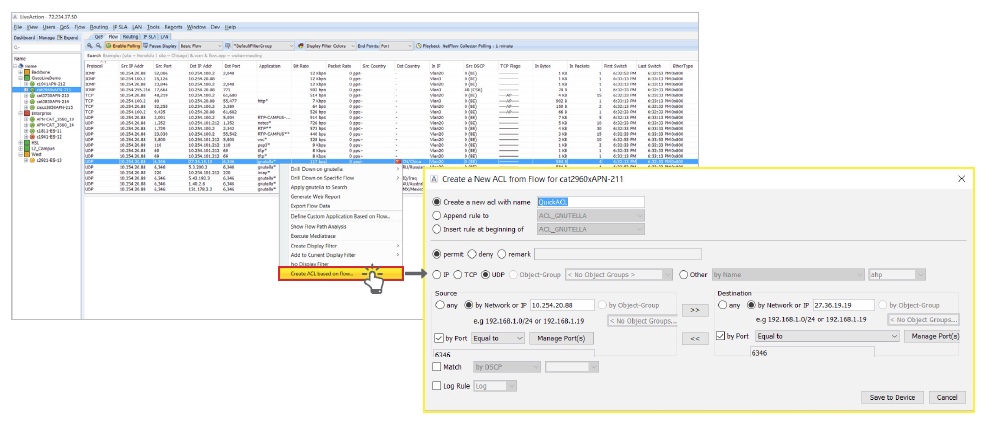

Or ACLs can be instantly created using NetFlow data:

Figure 11. Use NetFlow to create ACLs

QoS Monitoring and Analysis

LiveNX’s QoS monitoring tools will give you access to information that will help you better understand the performance of existing policies, and immediately validate changes made along the way.

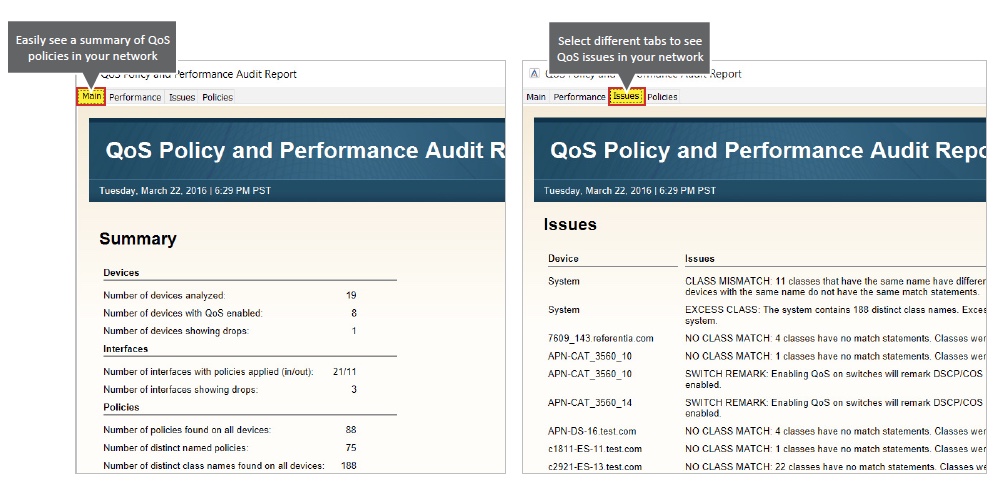

If policies are already in use, you can run a QoS Audit Report to quickly identify and understand any performance or configuration issues.

Figure 12. QoS Audit Report automatically summarizes policy issues

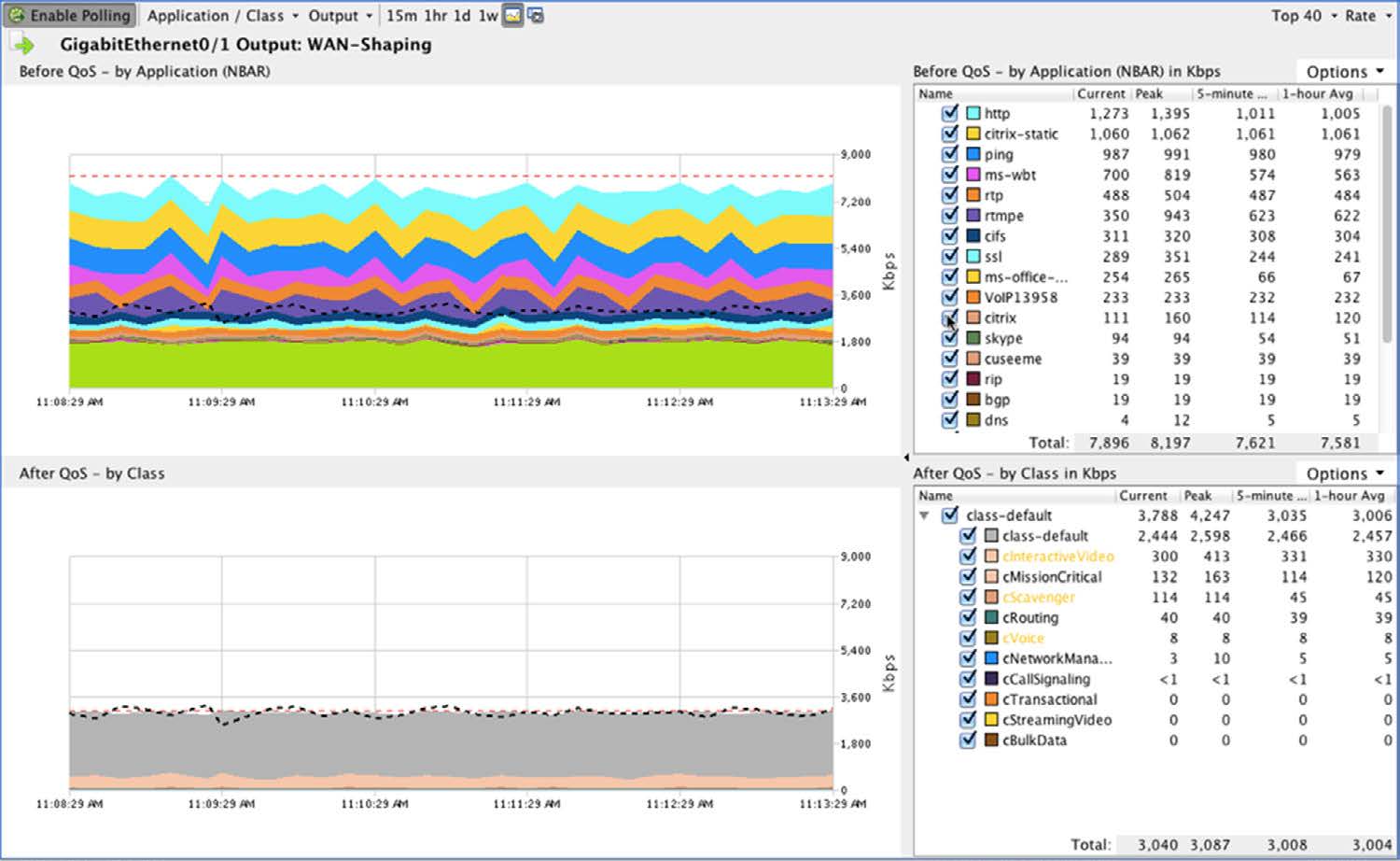

You can view the performance of a QoS policy on the input or output of an interface on a per-class basis, and even compare pre- and post-policy performance and NBAR2 application throughput. You can view this information in real time or historically using LiveNX QoS Reports.

Figure 13. Viewing real-time results of applied QoS policies

A simplified editor is available for quickly adjusting queue settings and bandwidth reservations for policies that are actively in use.

Figure 14. Easily view and adjust QoS policies

In addition, troubleshooting and implementation will be much easier with LiveNX’s real-time, system-wide flow visualization. This is critical for quickly gaining end-to-end awareness of the traffic flowing across the network and identifying points of congestion, incorrectly configured services, and invalid traffic.

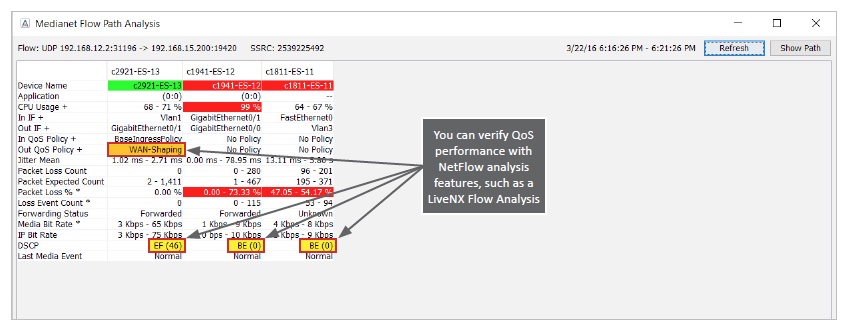

Using LiveNX Flow Path Analysis you can track down a single user’s conversation and view the performance of that conversation hop-by-hop through the network.

Traffic Generation and Analysis

Whether experimenting in the lab or verifying performance in an operational network, LiveNX helps you unlock Cisco’s IP SLA capabilities for traffic generation and analysis. LiveNX helps you quickly set up tests using HTTP, UDP, Jitter, Voice and other traffic types, visualize where those tests are active, and analyze the results.
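When analyzing jitter-type test results, it helps to know how a standard jitter figure is derived. The sketch below implements the interarrival jitter estimator defined in RFC 3550. The estimator is a running average of the change in one-way transit time with a gain of 1/16, computed from per-packet send and receive timestamps. It is shown for illustration only. Cisco IP SLA computes and reports its own jitter statistics, which are not necessarily calculated this way.

```python
def rfc3550_jitter(send_times_ms: list[float], recv_times_ms: list[float]) -> float:
    """Interarrival jitter estimate per RFC 3550: J += (|D(i-1, i)| - J) / 16,
    where D is the change in transit time between consecutive packets.
    A constant clock offset between sender and receiver cancels out in D."""
    if len(send_times_ms) != len(recv_times_ms):
        raise ValueError("need one receive time per send time")
    jitter = 0.0
    prev_transit = None
    for sent, received in zip(send_times_ms, recv_times_ms):
        transit = received - sent
        if prev_transit is not None:
            jitter += (abs(transit - prev_transit) - jitter) / 16
        prev_transit = transit
    return jitter


if __name__ == "__main__":
    sent = [0, 20, 40, 60]       # packets sent every 20 ms
    received = [10, 32, 51, 75]  # transit times of 10, 12, 11 and 15 ms
    print(f"jitter estimate: {rfc3550_jitter(sent, received):.3f} ms")
```

Because the estimator is smoothed, a single late packet moves it only slightly. Sustained variation in transit time is what drives it up.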

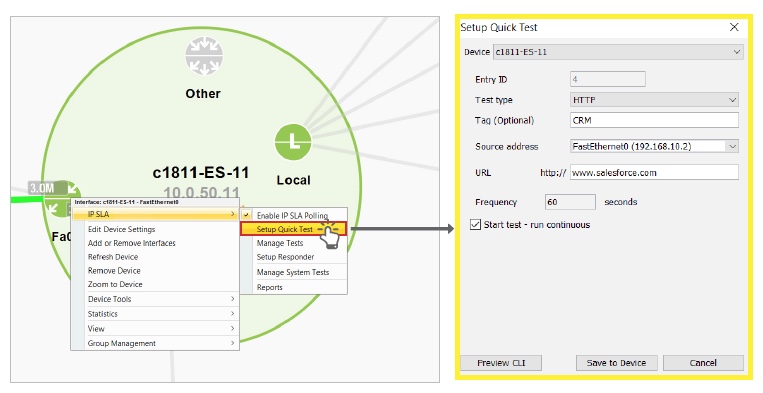

You can easily create an IP SLA test with a right-click on a managed device.

Figure 16. Create an IP SLA test

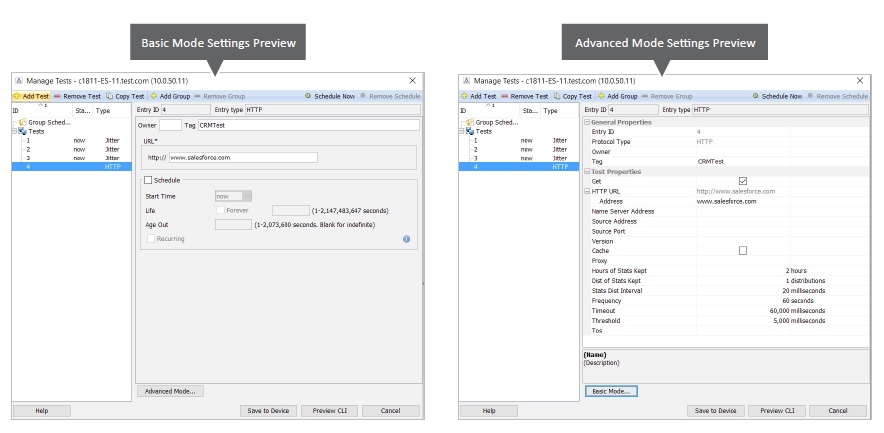

You can then easily manage and adjust IP SLA test settings to meet your network specifications.

Figure 17. Manage tests using basic and advanced test configuration views

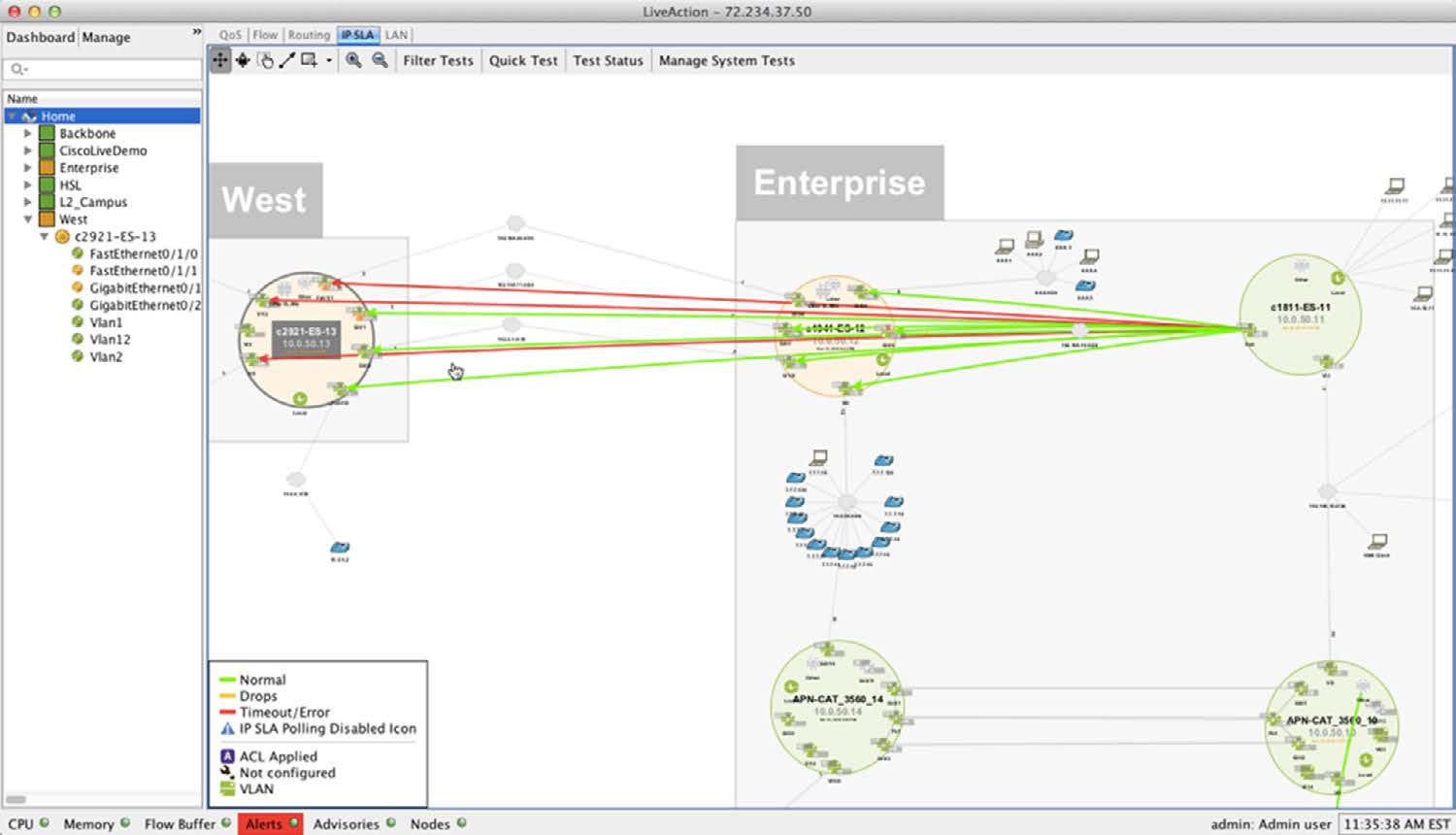

With LiveNX’s visual topology you can easily see the status of an IP SLA test.

Figure 18. View of running traffic tests through network topology

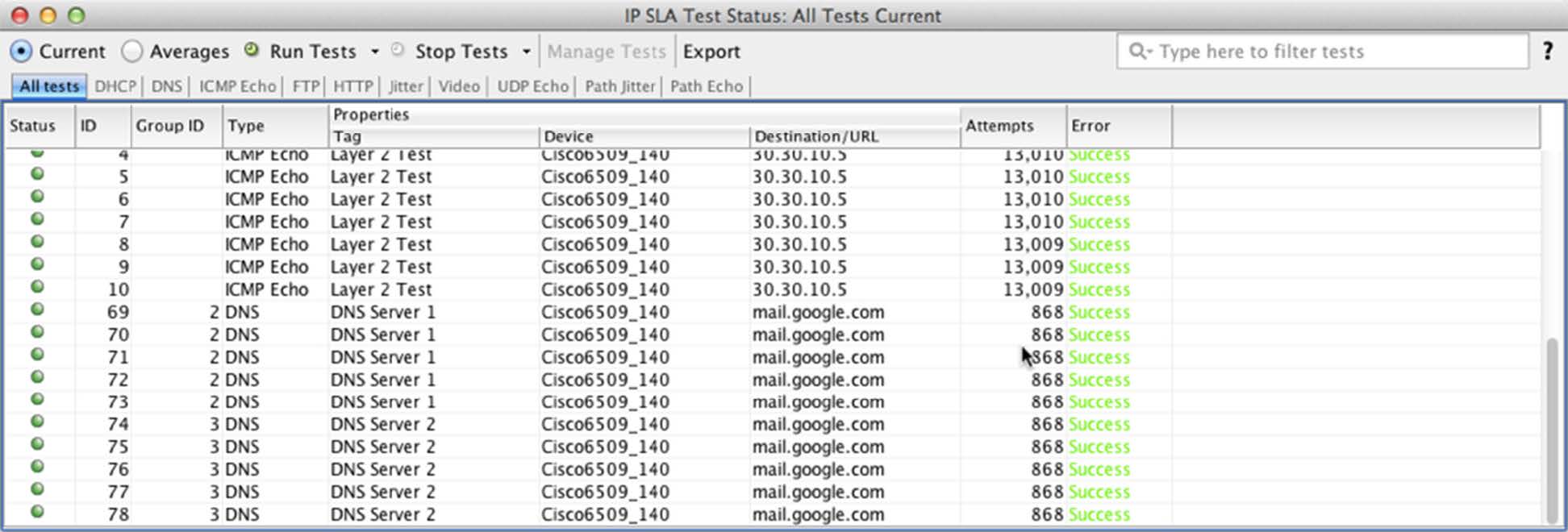

You can bring up the test status for all running IP SLA tests on the topology.

Figure 19. Test traffic status summary table

You can drill down on the data in the summary table to LiveNX IP SLA reports to view the performance of a single test over a selectable period of time.

Figure 20. Analyze IP SLA performance with LiveNX Reports

CONCLUSION

Cloud services can provide immense operational efficiencies for applications such as office productivity, offsite data storage and analysis, and application development and hosting. Whether your company is migrating existing desktop applications to the cloud or introducing new cloud-hosted services, these application changes will significantly alter your network traffic patterns. By planning for these changes and properly implementing QoS for your new cloud services, you can avoid future headaches for both end users and IT teams. Your enterprise will then be able to make the most of both operational efficiency and user experience.

MORE INFORMATION

Find out why—and how—User Experience Monitoring can accelerate problem resolution and simplify your application performance monitoring challenges.

Check out our updated webinar schedule—gain insights from our special presenters about topics like QoS, Hybrid WAN Management, Capacity Planning and more.

Case studies, white papers, eBooks and more are available for your learning on the LiveAction resources page.

LiveNX and LiveUX Downloads

Free downloads of LiveNX and LiveUX are available now. Visit our webpage to discover more details and benefits of LiveNX and LiveUX.

ABOUT LIVEACTION

LiveAction provides comprehensive and robust solutions for Network Performance Management. Key capabilities include Cisco Intelligent WAN visualization and service assurance, best-practice QoS policy management, and application-aware network performance management. LiveAction software’s rich GUI and visualization provide IT teams with a deep understanding of the network while simplifying and accelerating management and troubleshooting tasks.

